Cut Your Data in Half. Your Models Won't Notice

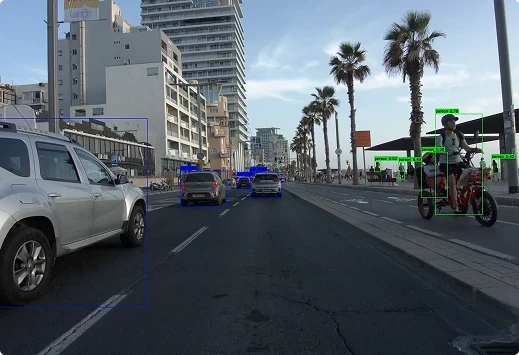

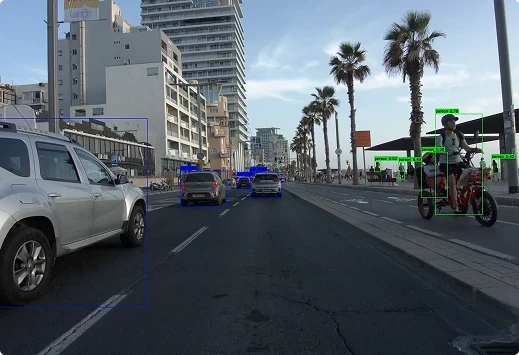

ML-Safe compression across the AV data lifecycle, validated for real-world and synthetic video

Cut Your Data in Half. Your Models Won't Notice

ML-Safe compression across the AV data lifecycle, validated for real-world and synthetic video

Cut Your Data in Half. Your Models Won't Notice

ML-Safe compression across the AV data lifecycle, validated for real-world and synthetic video

More sensors. More miles. More data than any pipeline was built for. We close the gap, frame by frame, without risking your model accuracy.

More sensors. More miles. More data than any pipeline was built for. We close the gap, frame by frame, without risking your model accuracy.

Recent Stories

Beamr to Demonstrate Video Data Processing for Resilient AV Models at Smart Mobility Summit

The demonstration of Beamr's ML-safe video data stack for autonomous vehicles (AV) will be held at the Smart Mobility Summit 2026, from May 17–18 at Expo Tel Aviv.

Read more→Beamr Research Validates Patented CABR Technology as an AI Training Asset

Training AI model on video data processed by Beamr’s content-adaptive technology made the model more resilient to compression, by lowering depth estimation error on safety-critical road users, including pedestrians and motorcyclists, by 30.7%

Read more→Compression with Proof: Article in Autonomous Vehicles International Magazine

Across the autonomous vehicle industry, compression is no longer optional. But how do you confirm that compressed video preserves ML model integrity across every scenario and pipeline stage?

Read more→Recent Stories

Beamr to Demonstrate Video Data Processing for Resilient AV Models at Smart Mobility Summit

The demonstration of Beamr's ML-safe video data stack for autonomous vehicles (AV) will be held at the Smart Mobility Summit 2026, from May 17–18 at Expo Tel Aviv.

Read more→Beamr Research Validates Patented CABR Technology as an AI Training Asset

Training AI model on video data processed by Beamr’s content-adaptive technology made the model more resilient to compression, by lowering depth estimation error on safety-critical road users, including pedestrians and motorcyclists, by 30.7%

Read more→Compression with Proof: Article in Autonomous Vehicles International Magazine

Across the autonomous vehicle industry, compression is no longer optional. But how do you confirm that compressed video preserves ML model integrity across every scenario and pipeline stage?

Read more→Questions We Get Asked a Lot

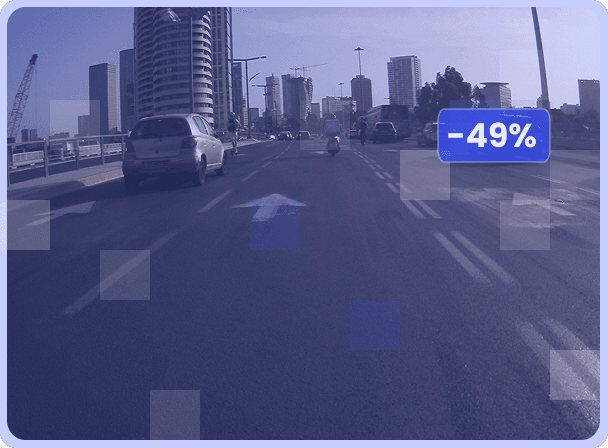

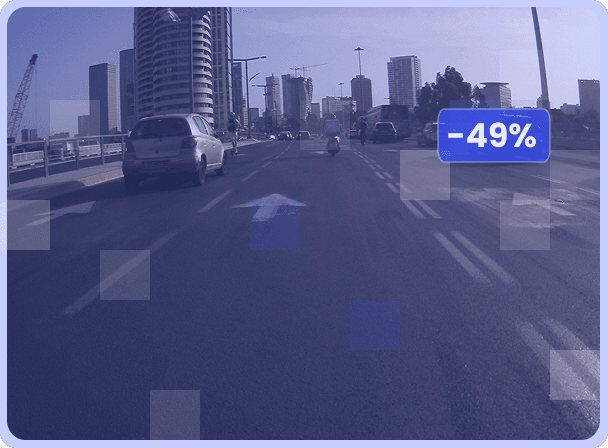

Your video data will be up to 50% smaller while preserving ML model accuracy. Smaller footprint means reduced storage and transfer costs, and improved I/O time. Beamr’s compression is validated across the ML pipeline with rigorous benchmarks verifying less than 2% difference in mean Average Precision (mAP) and robust results in industry-standard metrics.

he patented Content-Adaptive Bitrate (CABR) technology analyzes each frame individually, compressing as aggressively as the content allows while preserving the structural details ML models depend on — unlike standard methods that apply uniform compression parameters regardless of content.

You can ingest any input, output is in major codecs - AVC, HEVC, and AV1 - ensuring compatibility with existing decoders, and analytics tools. Deploys as a Docker container - cloud or on-prem - and integrates via SDK or FFmpeg plugin. If you're already running NVIDIA GPUs (Ampere or newer), you can use your infrastructure.

Yes. Book a demo and we'll run your content through Beamr's system. You see results on your actual data - not generic benchmarks.

Direct access to Beamr's engineering team: seasoned video experts who work with you from integration through optimization. No generic support tiers.

Beamr can be deployed within your own environment — nothing needs to leave your infrastructure. SOC 2® Type II compliant.

Per GB processed. No seat licenses. No upfront fees. You pay for what you compress.